The Surprising Reality of Families Living With Generative AI

For all the debate about artificial intelligence in schools, workplaces and governments, one of the most important battlegrounds may already be decided.

The family home.

A recent study exploring how parents and children actually use generative AI together reveals a reality that challenges almost every assumption currently shaping education policy and public discussion. Families are not simply banning AI. Nor are children secretly using it unchecked. Instead, households are negotiating with it. They are experimenting, learning alongside their children, and quietly redefining what digital parenting looks like in the age of conversational machines.

Surprise One: Parents Often Trust AI More Than Social Media

Many parents described ChatGPT as safer than platforms such as YouTube or TikTok.

The reason was simple.

There are no strangers involved.

Without visible social interaction, parents perceived fewer risks around grooming or online harassment. AI felt closer to a digital encyclopaedia than a social network.

But this confidence introduces a new danger.

Unlike search engines that clearly link answers to sources, generative AI delivers polished responses without visible authorship. Children may interpret confident explanations as factual authority.

For educators, this represents a major shift. Verification skills are no longer optional. Students may increasingly arrive in classrooms believing AI answers are already correct.

Surprise Two: AI Is Becoming a Shared Family Activity

Public discussion often assumes AI encourages isolation or shortcut learning.

The study suggests the opposite can also be true.

Families reported using ChatGPT together to:

- co-write stories,

- explain homework concepts,

- settle debates,

- brainstorm ideas,

- play collaborative games.

In some homes, AI even acted as a third participant in activities that normally required more players.

Rather than replacing interaction, AI sometimes increased conversation between parents and children. Joint exploration allowed parents to observe how their children thought, questioned and reasoned.

This mirrors earlier research on shared television or gaming experiences, suggesting generative AI may become a new form of family co-learning.

Surprise Three: Parents Are Improvising Without Tools

Parents were far from passive observers.

Many introduced rules around responsible use, accuracy checking and academic honesty. Some monitored prompts or reviewed chat histories.

Yet almost none relied on technical safeguards.

Not because they did not care.

Because meaningful parental controls barely exist.

Unlike streaming platforms or gaming consoles, most generative AI systems offer limited family account structures or child-specific safety settings. Families relied heavily on conversation and trust rather than technology.

This leaves schools in an unexpected position.

Teachers may increasingly become the primary source of AI literacy, not just for students but, indirectly, for parents as well.

What Families Are Actually Worried About

Academic cheating featured prominently, but it was rarely the only concern.

Parents worried about:

- misinformation delivered confidently,

- reduced creativity through over-reliance,

- privacy and data collection,

- intellectual property ownership,

- ethical decision-making.

Interestingly, some fears focused on long-term societal impacts rather than immediate risks, reflecting uncertainty about how powerful conversational AI may become.

Educators and academically focused parents were often the most cautious, particularly around originality and independent thinking.

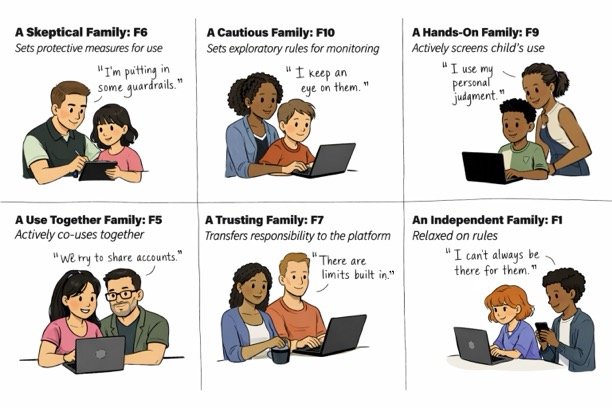

The Six Types of AI Families

One of the most useful contributions of the research is a framework describing six different family approaches to AI use.

Skeptical Families

Strong restrictions or avoidance strategies dominated. AI was viewed primarily as a risk.

Cautious Families

Parents allowed experimentation but monitored closely while learning alongside their children.

Hands-On Families

Active guidance defined use. Parents encouraged exploration combined with ongoing discussion about ethics and accuracy.

Use-Together Families

AI became a shared activity used collaboratively for learning or creativity.

Trusting Families

Parents relied largely on platform safeguards and assumed systems would prevent harm.

Independent Families

Children managed their own usage with minimal intervention, often supported by strong communication norms at home.

These profiles varied across three main dimensions:

- parental control,

- trust in AI safeguards,

- frequency of shared use.

No single model proved dominant.

Family culture, professional background and technological confidence strongly influenced decisions.

How Parents Are Mediating AI

Across households, four familiar parenting strategies appeared.

- Instructive mediation involved discussing ethics and bias or verifying information.

- Restrictive mediation limited when or how AI could be used.

- Supervision meant checking prompts or shared accounts.

- Co-use involved parents actively participating alongside children.

Co-use emerged far more frequently than expected.

Key Takeaways

- Families are already integrating AI into learning and everyday life.

- Many parents trust AI more than social media, potentially underestimating misinformation risks.

- Co-use is common. AI is often explored together rather than secretly.

- Parents lack meaningful technical safeguards and rely heavily on conversation-based rules.

- Cheating is not the only fear: families also worry about misinformation and lost creativity.

- Students may increasingly treat AI responses as authoritative unless explicitly taught verification skills.

See the original research from the University of Wisconsin-Madison.